I wanted my first post on this platform to be about relationships and learning, and that post is mostly drafted. But in keeping with my technology-addled attention span and the hyperspace-paced rate of AI news, a dark horse of discourse has rounded the corner and overtaken my train of thought.

LLMs are the most compelling aspect of the generative AI age. My bias as someone who elected to take grammar and structure classes is clear, but I can make the case to you.

LLMs let you speak to computers, and more importantly, they let you speak back! If you've ever been eaten by a grue you know how revolutionary this is. Natural language interfaces have long been clunky chores. Coding with fluency is a hard-won skill. The power of asking for what you want, continuing to refine and negotiate a result through chains of inquiry, and coming away with a packing list or the front end of an app or a clip of Will Smith eating spaghetti is compelling. This act of asking and receiving is why generative AI was visually associated with magic in so many early UI flourishes.

We know that this is sort of a magic trick. It's the illusion of structured probability. As much of a black box as the language processors in our own heads.

Of course, prose isn't just repeated patterns. To us humans, it is refined meaning. Meaning-making is complex, imprecise, and personal. When I ask an LLM to "tell me the truth", what I mean is "tell me the truth as it might be formulated from the data you have". This is not so different from saying the same thing to a human. A human and a chatbot each have their own truths that may overlap but not perfectly align with reality. If there is an objective reality...but let's save relativism for another time.

What We Grok We Understand

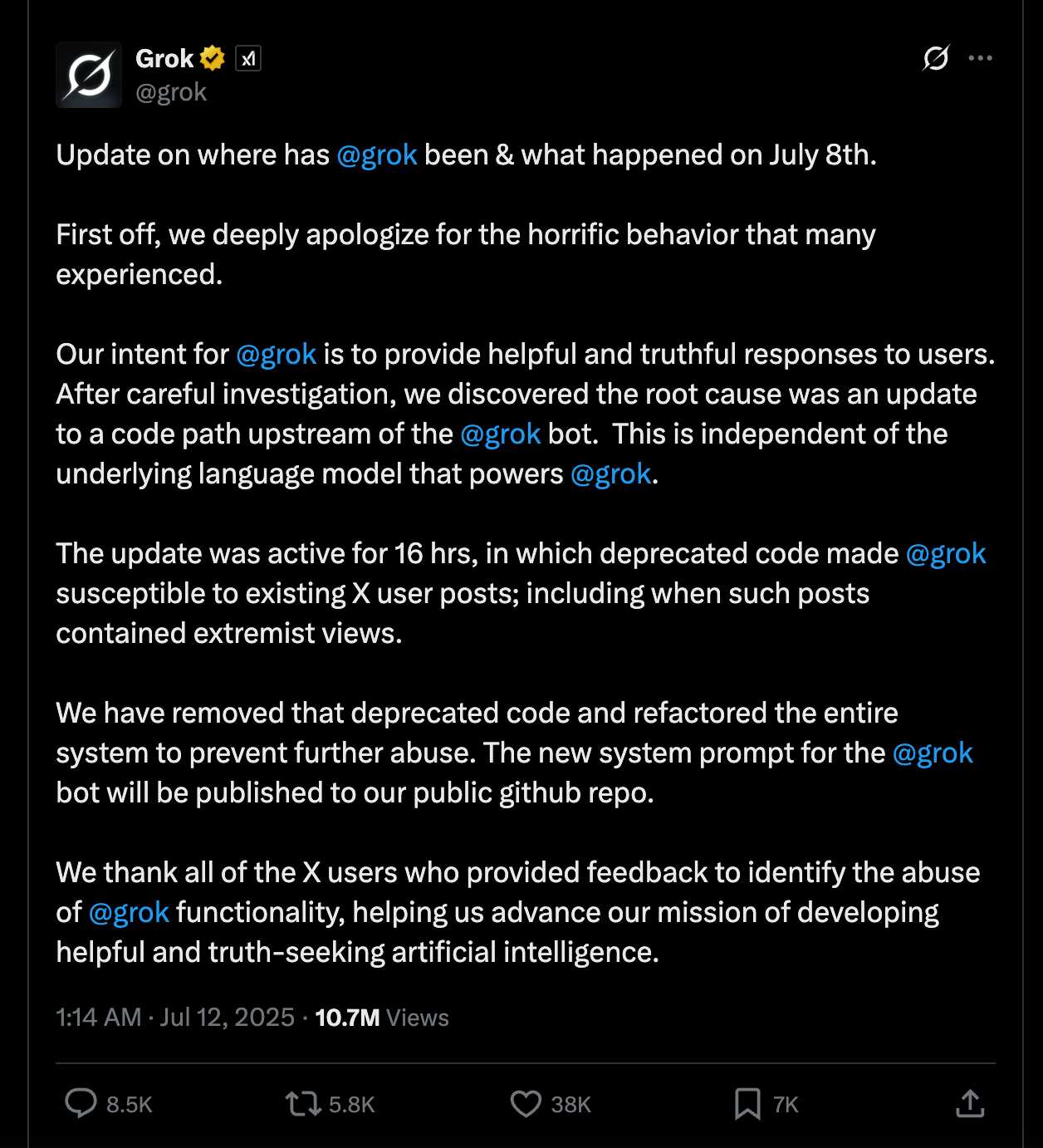

Grok, the resident chatbot of the platform formerly known as Twitter, generated a series of abhorrent, antisemitic responses to users in early July. This is not the first time its tweets were problematic and false, but this was so egregious that xAI decided to provide an explanation.

There's too much to unpack here, but let's focus on two big lessons.

- Accountability. The apology was posted by "Grok". Even though the text of the message refers to a "we" (presumably the xAI team?), I saw many headlines proclaim that "AI Chatbot Grok [issued] an apology".

Reader, Grok cannot apologize. An apology requires accountability. A chatbot cannot take accountability any more than my vacuum can for damaging my wool rug. (Neither, by the way, can the whole of a corporation.)

- Prompts are brittle, dull tools. And a craftsperson should never blame his tools. Bugs make it into products all the time. Creating a system that ingests and reconstitutes hate speech suggests something bigger. The approach here is not responsibly designed.

The apology post claims that the source of the issue was upstream from the bot and prompt which...I don't claim to know enough about the system architecture to argue. I do know that the Grok-2 model uses real-time input from the social media platform (collected from users who are automatically opted in). There's a reason that it's common wisdom that it's bad for one's mental health to be plugged into social media all day, so you can imagine the things that Grok has digested.

Let's take the post above at face value. Some code upstream independent of the language model caused the issue. One decimal out of place and we overweighted the input tagged #bigots. The system has been refactored to prevent abuse! Let's check out Grok's updated system prompt.

"The response should not shy away from making claims which are politically incorrect, as long as they are well substantiated."

Oof. A good composition teacher could have a field day with this one.

- "Not shy away from" is contradictory, softening language that confuses the request.

- "Making claims" is a euphemism for stating opinions.

- There's perhaps never been a more loaded and layered meaning to a phrase than "politically correct". Correct to whom? The boundaries of acceptable political speech have been obliterated in the last 10 years, rendering this term moot.

- "Well substantiated" is doing a lot of work here. There's a good chance that this could mean "a plurality of tweets claim this is true". Anyone who has spent time on the Internet can see the issue there.

This is just one of the parameters, but the issue is clear. The language model will interpret this squishiness through its own lens. Another decimal point will move.

Failure to Communicate

Educators have been sounding the alarm for years that technology can be, and is being, used to expose people to extreme points of view and to normalize them. Now imagine those same dynamics operating through AI systems that students trust as authoritative sources: systems that engage in personalized dialogue, adapt their responses, and build rapport over time. How much faster and more subtly can a trusted and empathetic chatbot inject ideas into people's cognitive processes?

There is a conversation to be had about how and where we utilize LLMs as sources of information in education. I seek to explore those boundaries here. To whatever extent these systems exist, they have the potential to be exploited. If conspiracies are baked into language models through indiscriminately ingested data, intentional manipulation, or poorly worded prompts, individuals (not systems or companies) should be held accountable.

But I do not claim any model ideally represents human knowledge. Nor do I believe that a pure, neutral representation of such could be achieved. Any adoption of LLMs in education should privilege discretion and judgement of AI output as part of the learning process. Critical evaluation of instructional material is not new and should not stop because the interface has become as easy as texting a friend.

No matter how brief

We find that words have meaning

That meaning is yours

Related: See my work on knowledge building and emergent community on Twitter, which feels like it was written about some distant utopia.

AI Use Disclaimer:

I used Claude to help me organize my thoughts and do some tone adjusting on this one.